HetSyn

Last updated: 13 minutes ago

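# My academic career has finally left a small mark

Ahem. After all these years, I suddenly remembered that I still have a blog.

My first submission actually got accepted!!! I meant to write something about it, but once I sat down I did not know what to say. In any case, everyone is welcome to come and take a look at our work.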

We are pleased to present our work, “HetSyn: Versatile Timescale Integration in Spiking Neural Networks via Heterogeneous Synapses”.

# Motivations & Contributions

Motivations

- Spiking Neural Networks (SNNs) offer a biologically plausible and energy-efficient computing paradigm characterized by sparse, event-driven signaling and intrinsic temporal processing capabilities.

- Synaptic heterogeneity, which is widely observed across brain regions and cell types, has been largely overlooked in the design of SNNs, and its computational potential remains underexplored.

Contributions

- We propose HetSyn, the first modeling framework to explicitly explore synaptic heterogeneity in SNNs.

- We demonstrate that HetSyn serves as a unified and extensible framework, capable of representing a wide range of existing spiking neuron models.

- We instantiate HetSyn as HetSynLIF and demonstrate its effectiveness across multiple temporal tasks.

# Methods

# Vanilla LIF

The vanilla Leaky Integrate-and-Fire (LIF) neuron, where all inputs share the same membrane time constant, is defined using the following differential equation:
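In one common textbook form, the dynamics read as follows. The symbols are assumed notation and may not match the paper's exactly: $\tau_m$ is the membrane time constant, $V_{\text{rest}}$ the resting potential, $R$ the membrane resistance, and $I(t)$ the total input current.

$$
\tau_m \frac{dV(t)}{dt} = -\bigl(V(t) - V_{\text{rest}}\bigr) + R\, I(t)
$$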

In practice, computer simulations typically use a discrete-time formulation, and a spike is emitted by a Heaviside step function when the membrane potential exceeds a threshold.
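To make the discrete-time picture concrete, here is a minimal NumPy sketch of a single LIF neuron. It assumes one shared decay factor `alpha` (playing the role of exp(-Δt/τ)), a soft reset that subtracts the threshold after a spike, and a zero resting potential. The function name `simulate_lif` and its parameters are illustrative choices, not taken from the paper.

```python
import numpy as np

def simulate_lif(spikes_in, weights, alpha=0.9, threshold=1.0):
    """Simulate one discrete-time LIF neuron (illustrative sketch).

    spikes_in: (T, n_in) array of 0/1 input spikes.
    weights:   (n_in,) array of synaptic weights.
    alpha:     shared membrane decay factor, assumed identical for all inputs.
    """
    T = spikes_in.shape[0]
    v = 0.0        # membrane potential
    z_prev = 0.0   # output spike from the previous step
    v_trace = np.zeros(T)
    z_trace = np.zeros(T)
    for t in range(T):
        # Leak, integrate the weighted input, and apply a soft reset
        # if the neuron fired on the previous step.
        v = alpha * v + weights @ spikes_in[t] - threshold * z_prev
        # Heaviside step: emit a spike when the potential exceeds the threshold.
        z = float(v > threshold)
        v_trace[t] = v
        z_trace[t] = z
        z_prev = z
    return v_trace, z_trace
```

Because `alpha` is a single scalar, every input is forgotten at the same rate, which is exactly the restriction that the heterogeneous-synapse formulation lifts.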

# Equipped with HetSyn

In HetSyn, we replace the uniform time constant with synapse-specific decay factors and model the reset process as a negative current injection. Each synaptic current now evolves independently over time, allowing the neuron to integrate information at multiple timescales:
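As a rough illustration of this idea, the sketch below gives every synapse its own decay factor and tracks the reset as a separate, decaying negative current. The update rule, the function name `simulate_hetsyn_lif`, and all parameter names are assumptions made for illustration and may differ from the paper's exact formulation.

```python
import numpy as np

def simulate_hetsyn_lif(spikes_in, weights, syn_decay,
                        reset_decay=0.9, threshold=1.0):
    """Single neuron with synapse-specific decay factors (illustrative sketch).

    spikes_in:   (T, n_in) array of 0/1 input spikes.
    weights:     (n_in,) synaptic weights.
    syn_decay:   (n_in,) decay factor per synapse (assumed parameterization).
    reset_decay: decay factor of the reset current (assumed parameterization).
    """
    T, n_in = spikes_in.shape
    i_syn = np.zeros(n_in)  # one current per synapse, each with its own timescale
    i_reset = 0.0           # reset modeled as a negative current
    z_prev = 0.0
    v_trace = np.zeros(T)
    z_trace = np.zeros(T)
    for t in range(T):
        # Each synaptic current decays independently and integrates its own input.
        i_syn = syn_decay * i_syn + weights * spikes_in[t]
        # A spike on the previous step injects a negative current that also decays.
        i_reset = reset_decay * i_reset - threshold * z_prev
        # The membrane potential is the sum of all currents.
        v = i_syn.sum() + i_reset
        z = float(v > threshold)
        v_trace[t] = v
        z_trace[t] = z
        z_prev = z
    return v_trace, z_trace
```

With one fast synapse (decay 0.1) and one slow synapse (decay 0.9), a single input spike leaves a trace that fades quickly on the first and lingers on the second, so the same neuron carries both short and long timescales.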

# Generalization

HetSynLIF recovers three existing neuron models under particular parameter choices:

- If all synaptic decay factors and the reset current decay factor are identical and equal to a single shared value, then the HetSynLIF model reduces to the HomNeuLIF model;

- Building on this case, if we further decompose the reset current into a standard component and an additional adaptation current, then HetSynLIF generalizes to the HomNeuALIF model;

- For each post-synaptic neuron, if all of its synaptic decay factors and its reset current decay factor are equal to a single neuron-specific value, then the HetSynLIF model reduces to the HetNeuLIF model.
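The first reduction can be checked numerically. The sketch below is a simplified single-neuron setting with assumed update rules: per-synapse currents plus a decaying negative reset current for the heterogeneous model, and a leaky membrane with soft reset for the homogeneous one. The function names `hom_lif`, `hetsyn_lif`, and `check_reduction` are hypothetical. It ties all decay factors to one value and compares the two models on random input.

```python
import numpy as np

def hom_lif(spikes_in, weights, alpha, threshold=1.0):
    """Homogeneous LIF: one decay factor shared by all inputs (assumed form)."""
    v, z_prev = 0.0, 0.0
    vs, zs = [], []
    for s in spikes_in:
        v = alpha * v + weights @ s - threshold * z_prev
        z_prev = float(v > threshold)
        vs.append(v)
        zs.append(z_prev)
    return np.array(vs), np.array(zs)

def hetsyn_lif(spikes_in, weights, syn_decay, reset_decay, threshold=1.0):
    """Heterogeneous-synapse LIF: per-synapse currents plus a reset current (assumed form)."""
    i_syn = np.zeros(weights.shape[0])
    i_reset, z_prev = 0.0, 0.0
    vs, zs = [], []
    for s in spikes_in:
        i_syn = syn_decay * i_syn + weights * s
        i_reset = reset_decay * i_reset - threshold * z_prev
        v = i_syn.sum() + i_reset
        z_prev = float(v > threshold)
        vs.append(v)
        zs.append(z_prev)
    return np.array(vs), np.array(zs)

def check_reduction(alpha=0.9, n_in=5, steps=200, seed=0):
    """Return True if the two models agree when every decay factor equals alpha."""
    rng = np.random.default_rng(seed)
    spikes_in = (rng.random((steps, n_in)) < 0.2).astype(float)
    weights = rng.uniform(0.1, 0.5, size=n_in)
    v_hom, z_hom = hom_lif(spikes_in, weights, alpha)
    v_het, z_het = hetsyn_lif(spikes_in, weights, np.full(n_in, alpha), alpha)
    return bool(np.allclose(v_hom, v_het) and np.array_equal(z_hom, z_het))
```

The agreement follows from linearity: when every current decays by the same factor, summing the per-synapse updates gives back the single leaky membrane update, including the reset term.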

# Experiments

We demonstrate that HetSynLIF not only improves the performance of SNNs across a variety of tasks, but also exhibits strong robustness to noise, enhanced working memory performance, and efficiency under limited neuron resources.

Performance on Pattern Generation Task

In the pattern generation task, HetSynLIF learns long-term temporal dependencies faster and more accurately, and shows strong robustness to noise.

Performance on Delayed Match-to-Sample Task

In the delayed match-to-sample task, HetSynLIF demonstrates excellent working memory performance, even with limited neuron resources.

Performance on Speech Recognition

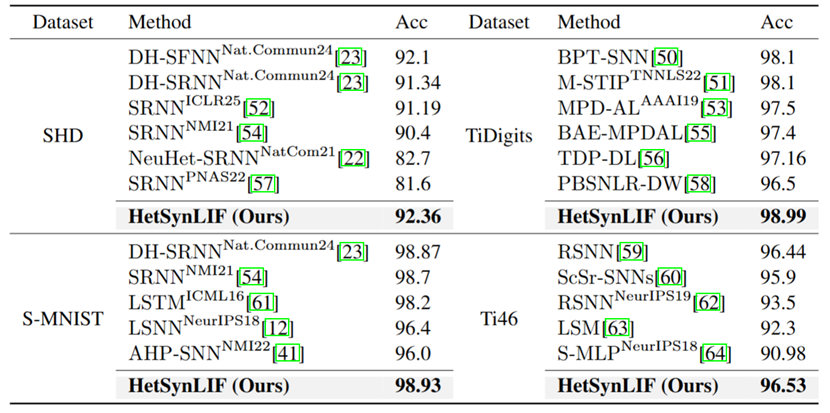

Accuracy Comparison on Four Datasets

In addition, the HetSynLIF model demonstrates outstanding performance on the other three datasets as well, consistently outperforming prior methods, highlighting its effectiveness in processing multi-timescale temporal dynamics across both speech and visual recognition tasks.

# Conclusion

In summary, HetSyn brings synaptic heterogeneity into SNN modeling and achieves versatile timescale integration. We believe this framework opens new directions for building more efficient and brain-inspired learning systems.

Unless otherwise stated, all articles on this blog are licensed under CC BY-SA 4.0. Please credit the source when reposting!